Introduction to Embedded Electronic Displays

In this article you’ll learn all about the different types of displays commonly embedded into electronic devices.

If your new product requires an electronic display, then it is essential that you select the right type of display.

Your choice of a display directly impacts your product’s user experience simply by being one of its most visible aspects. A display is also likely to be one of the most expensive and most power-hungry components in your product.

So let’s look more closely at how you can balance various tradeoffs in order to select the display technology that is best for your product.

Display Basics

Before we proceed there are a few technical terms you need to understand in order to select the best display.

Most displays are classified as reflective, transmissive, or emissive. Reflective displays depend on light reflected from the front of the display. The background of such a display is usually reflective, with the displayed pattern selectively blocking the reflection.

Transmissive displays depend on allowing or blocking a backlight to display an image. The backlight is normally white, and each pixel actually consists of three sub-pixels that can selectively pass the Red, Green, and Blue part of the white backlight.

Emissive displays actually emit light on their own. Each pixel emits its own light in the three primary R, G, B colors. By varying the intensity of emitted light, full color images can be created.

Color gamut is a measure of how broad the range of available colors is in a display. Monochrome displays, on the other hand, have only one available color.

Full color displays typically combine three primary colors – Red, Green and Blue (RGB). Mixing these primary colors at various intensities can reproduce realistic looking full color images.

Color depth specifies the number of intensity levels for each color component. A color depth of N means that each color can be displayed at N different intensities.

For example, an RGB display with a color depth of N for each color can show N x N x N possible color combinations. The simplest color depth is two. That is, a segment or a pixel is either fully ON or fully OFF.
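To make the N x N x N relationship concrete, here is a minimal Python sketch. The function name `color_count` and the sample depths are illustrative choices, not values taken from any particular display.

```python
def color_count(levels_per_channel: int, channels: int = 3) -> int:
    """Number of distinct colors when every channel has the same number of levels."""
    return levels_per_channel ** channels


# Sample depths chosen for illustration only.
for levels in (2, 16, 64, 256):
    print(f"{levels:>3} levels per channel -> {color_count(levels):,} colors")
```

With a color depth of two, an RGB display can show only 8 colors, while 256 levels per primary color gives the roughly 16.7 million colors often quoted for full color displays.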

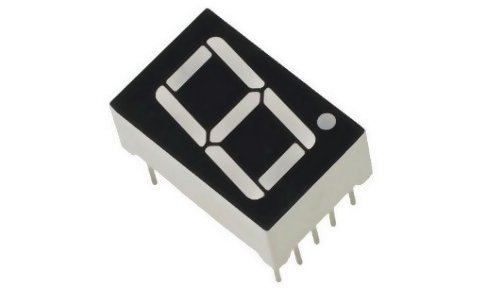

Simple Segmented Displays

If you need to simply display some numbers and, say, a small set of letters, then you should consider a segmented display. Typical examples of segmented displays are found in digital clocks.

Each digit is made up of seven segments that can be individually turned on or off. A digit can display the numbers 0 to 9, and some letters such as A, C, E, F, H, h, L, P, r, t, and U.
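The sketch below shows one way firmware might describe which segments to light for each character. It assumes the common a to g segment naming and an arbitrary bit order (bit 0 for segment a); a real driver chip may expect a different order, so treat `GLYPHS`, `encode`, and `render` as illustrative names only.

```python
# Segment names follow the common a-g convention:
#   a = top, b = upper right, c = lower right, d = bottom,
#   e = lower left, f = upper left, g = middle.
GLYPHS = {
    "0": "abcdef", "1": "bc", "2": "abdeg", "3": "abcdg", "4": "bcfg",
    "5": "acdfg", "6": "acdefg", "7": "abc", "8": "abcdefg", "9": "abcdfg",
    "A": "abcefg", "C": "adef", "E": "adefg", "F": "aefg", "H": "bcefg",
    "h": "cefg", "L": "def", "P": "abefg", "r": "eg", "t": "defg", "U": "bcdef",
}


def encode(ch: str) -> int:
    """Return a 7-bit mask for ch (assumed order: bit 0 = segment a, bit 6 = segment g)."""
    return sum(1 << "abcdefg".index(seg) for seg in GLYPHS[ch])


def render(ch: str) -> list[str]:
    """Draw the glyph as three text rows so the pattern can be checked by eye."""
    lit = set(GLYPHS[ch])

    def seg(name: str, mark: str) -> str:
        return mark if name in lit else " "

    return [
        " " + seg("a", "_") + " ",
        seg("f", "|") + seg("g", "_") + seg("b", "|"),
        seg("e", "|") + seg("d", "_") + seg("c", "|"),
    ]


for ch in "0123456789":
    print(ch, f"0x{encode(ch):02X}")
print("\n".join(render("8")))
```

Storing the glyphs as segment letters rather than raw hex values keeps the table easy to check against a datasheet drawing, and the bit order can be changed in one place.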

Some segmented displays have more than seven segments, and can thus display a wider range of patterns.

Typically, these types of displays use LEDs (Light Emitting Diodes) for each segment, and their color is commonly red. However, segmented LCD displays are also available. A picture of a typical seven-segment LED display is shown in Figure 1 below.

Table 1 below summarizes the characteristics of simple segmented displays.

| Characteristics | Ratings | Notes |
|---|---|---|
| Color gamut | Monochromatic | Usually red. |
| Color depth | 2 | A segment is either ON or OFF. |
| Display type | Emissive | Can be read in sunlight or subdued light. |
| Update speed | High | Digits can be updated very fast. |
| Temperature range | High | Operates over a wide temperature range. |
| Interface complexity | Medium | Even with the proper driver chip, such displays can use lots of processor IO lines to properly address. |
| Power consumption | Medium – Variable | Depends on the number of segments that are currently turned on. |
| Size | Variable | Can be from about 0.2” tall to several inches tall. |
| Cost | Low – Variable | Almost proportional to the number of digits being used. |

Table 1 – Segmented displays at a glance

Alphanumeric Displays

The next level of functionality in displays is an alphanumeric display. This display consists of one or more rows of character cells. Each character cell actually consists of a fixed-size array of pixels that can display predefined, and some user-defined, characters or symbols.

There can be many such character cells per row. Common sizes for displays of this type are 16 x 1, 16 x 2, 24 x 1, 24 x 2, 16 x 4 and others. These numbers refer to the number of character cells in the display. For example, a 16 x 2 display has two rows of sixteen character cells each.
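One way to picture a 16 x 2 display in software is as a small grid of character cells. The class below is a hypothetical model for illustration only; it is not the command set of any real display controller, and `CharDisplay` and its methods are made-up names.

```python
class CharDisplay:
    """In-memory model of a character display made of rows of fixed cells."""

    def __init__(self, cols: int = 16, rows: int = 2):
        self.cols = cols
        self.rows = rows
        self.cells = [[" "] * cols for _ in range(rows)]

    def write(self, row: int, col: int, text: str) -> None:
        """Place text starting at (row, col); characters past the row end are dropped."""
        if not 0 <= row < self.rows or not 0 <= col < self.cols:
            raise ValueError("start position is outside the display")
        for offset, ch in enumerate(text[: self.cols - col]):
            self.cells[row][col + offset] = ch

    def lines(self) -> list[str]:
        """Return each row as a string exactly cols characters wide."""
        return ["".join(row) for row in self.cells]


display = CharDisplay(cols=16, rows=2)
display.write(0, 0, "Temp: 21.5 C")
display.write(1, 0, "Fan: ON")
for line in display.lines():
    print(f"|{line}|")
```

Because each row holds a fixed number of cells, anything longer than the row is simply cut off in this sketch, which is a useful reminder to keep messages within the cell count of the display you select.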

The most commonly available displays of this type are reflective LCDs (Liquid Crystal Displays). A picture of an LCD alphanumeric display is shown below in Figure 2.

Table 2 below gives some important characteristics of alphanumeric displays.

| Characteristics | Ratings | Notes |
|---|---|---|
| Color gamut | Monochromatic | Can be gray, white, or yellow on various colored backgrounds. |
| Color depth | 2 | A pixel is either ON or OFF. |
| Display type | Reflective | Can only be read in bright light. Some displays also have small front mounted side lights. |
| Update speed | Low | Display cannot be updated very fast without the user seeing some visual artifacts. |
| Temperature range | Medium | Display can become sluggish at low temperatures. |
| Interface complexity | Medium | Display uses at least six IO lines to properly address. |
| Power consumption | Low | Front side light, if used, can significantly add to the power consumption. |
| Size | Variable | Available in a wide variety of sizes. Can be very small or up to 6” wide or more. |
| Cost | Low – Medium | Reasonably low cost. |

Table 2 – Alphanumeric displays at a glance

Graphics Displays

Displays in this category consist of individually addressable pixels. They are capable of displaying anything from simple text and static images to animations and full motion video.
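Individually addressable pixels are usually handled in software through a frame buffer, a block of memory with one entry per pixel. The sketch below models a monochrome buffer with one bit per pixel. The row-major, most-significant-bit-first layout is an assumption made for illustration; real display controllers often use other layouts, so check the datasheet before reusing this.

```python
class MonoFrameBuffer:
    """1-bit-per-pixel frame buffer, row-major, MSB = leftmost pixel (assumed layout)."""

    def __init__(self, width: int, height: int):
        self.width = width
        self.height = height
        self.stride = (width + 7) // 8          # bytes per row, rounded up
        self.data = bytearray(self.stride * height)

    def _locate(self, x: int, y: int) -> tuple[int, int]:
        """Return (byte index, bit mask) for pixel (x, y)."""
        return y * self.stride + x // 8, 0x80 >> (x % 8)

    def set_pixel(self, x: int, y: int, on: bool = True) -> None:
        """Turn one pixel on or off; off-screen coordinates are ignored."""
        if not (0 <= x < self.width and 0 <= y < self.height):
            return
        index, mask = self._locate(x, y)
        if on:
            self.data[index] |= mask
        else:
            self.data[index] &= ~mask & 0xFF

    def get_pixel(self, x: int, y: int) -> bool:
        index, mask = self._locate(x, y)
        return bool(self.data[index] & mask)


fb = MonoFrameBuffer(128, 64)                   # hypothetical 128 x 64 panel
for x in range(128):
    fb.set_pixel(x, 32)                         # draw a horizontal line
print(len(fb.data), "bytes of frame buffer")
```

Even this small monochrome example needs 1,024 bytes of RAM, which is worth keeping in mind when pairing a graphics display with a small microcontroller.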

Unsurprisingly, they cost more than displays in the previous categories. Even so, there are quite a few varieties with varying cost-benefit ratios. This section will explore a few of the most commonly available varieties.

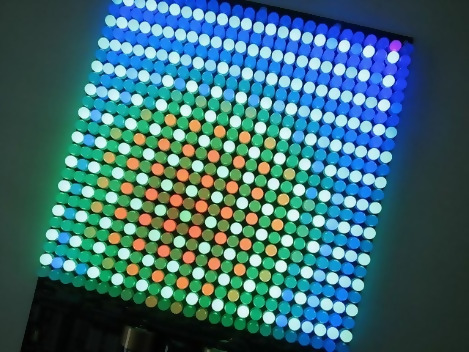

LED matrix display

The LED matrix display consists of a matrix of discrete LEDs that can be individually addressed. This type of display exists in monochrome, multicolor, and full color varieties.

The pixel density, or dots per inch (DPI), of this display is very low. This display is best viewed from a distance; otherwise the individual pixels will be distinctly visible.
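Pixel density is easy to estimate from numbers found on most datasheets. The sketch below shows two common ways to compute it: from the spacing between LEDs (often called the pixel pitch) and from the resolution and diagonal size. The 4 mm pitch and the 1080 x 1920, 5” panel are hypothetical examples, not figures from this article.

```python
import math

MM_PER_INCH = 25.4


def dpi_from_pitch(pitch_mm: float) -> float:
    """Pixel density when the center-to-center pixel spacing is known."""
    return MM_PER_INCH / pitch_mm


def dpi_from_diagonal(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density from the resolution and the diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Hypothetical LED matrix with 4 mm between LEDs.
print(f"LED matrix: {dpi_from_pitch(4.0):.2f} DPI")
# Hypothetical high density 5 inch panel for comparison.
print(f"Dense panel: {dpi_from_diagonal(1080, 1920, 5.0):.0f} DPI")
```

The difference of nearly two orders of magnitude between the two examples illustrates why an LED matrix looks fine from across a room but blocky up close.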

A picture of a full color LED matrix display is shown in Figure 3.

Table 3 below summarizes the characteristics of LED matrix displays.

| Characteristics | Ratings | Notes |
|---|---|---|
| Color gamut | Monochromatic, multicolor or full RGB | Can be only one color, a small set of colors, or any color in the case of full RGB. |
| Color depth | 2 to 64 typically | Can display a limited range of intensity levels. |
| Display type | Emissive | Can be read in any light conditions. |
| Update speed | Low | Low speed is mostly due to available controllers, not the LEDs themselves. |
| Temperature range | Medium | Display can become overheated at higher temperatures. Active cooling (usually fans) is then required. |
| Interface complexity | Medium | May require a lot of IO lines depending on the size of the display. |
| Power consumption | High | Depends on how many pixels are lit, but typically high. |
| Size | Variable | A wide variety is available up to arena size. |
| Cost | Medium – High | Depends on size and pixel resolution. |

Table 3 – LED matrix displays at a glance

E-ink displays

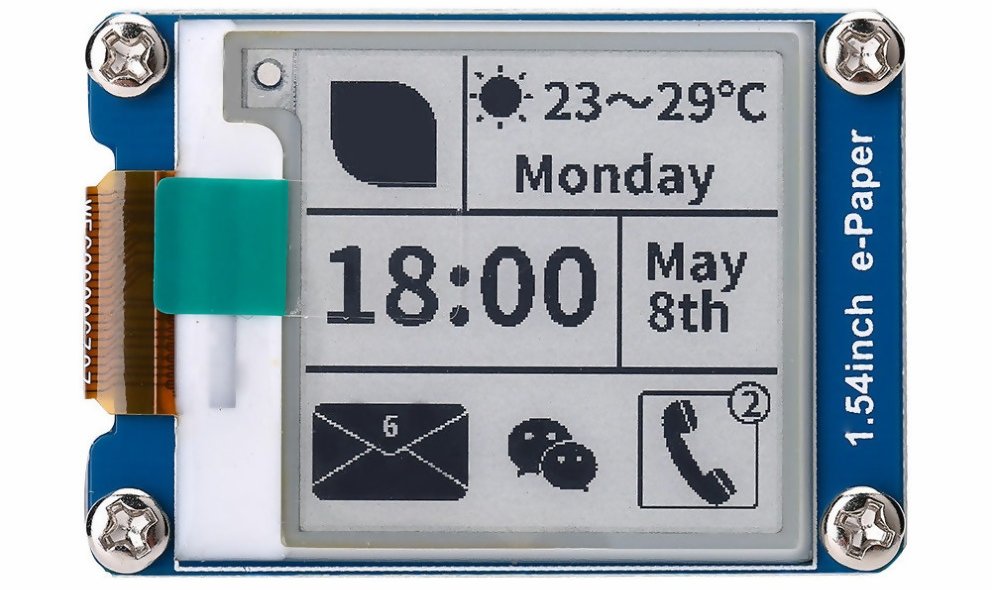

E-ink, or e-paper, displays are very low power displays suitable for specific applications where fast update speed is not required. They are available in a variety of sizes. Typically they are monochrome, but some can display limited color combinations.

Since they use a reflective technology, these displays are best viewed in bright light environments.

One unique characteristic of this display is that once rendered, the image stays on the screen, even if power is turned off. In certain applications this can allow for extremely low power consumption.
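A rough calculation shows why image retention matters for battery life. The sketch below spreads the energy of each refresh over an hour to get an average power figure. All of the numbers (30 mJ per refresh, four refreshes per hour, a 60 mW always-on display for comparison) are hypothetical placeholders; substitute values from your own display’s datasheet.

```python
def average_power_mw(energy_per_refresh_mj: float,
                     refreshes_per_hour: float,
                     idle_power_mw: float = 0.0) -> float:
    """Average power in mW: refresh energy spread over one hour, plus any idle draw.

    1 mJ per second equals 1 mW, so dividing mJ per hour by 3600 s gives mW.
    """
    return energy_per_refresh_mj * refreshes_per_hour / 3600.0 + idle_power_mw


# Hypothetical e-ink display: 30 mJ per refresh, updated every 15 minutes.
eink_mw = average_power_mw(energy_per_refresh_mj=30.0, refreshes_per_hour=4)

# Hypothetical display that must be powered continuously at 60 mW.
always_on_mw = 60.0

print(f"e-ink average: {eink_mw:.4f} mW")
print(f"always-on:     {always_on_mw:.1f} mW")
```

The advantage shrinks quickly as the update rate rises, which is why e-ink suits applications such as shelf labels or status signs rather than anything that changes every second.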

Figure 4 shows a typical small monochrome e-ink display.

Figure 4 – Small e-paper display

Table 4 below gives some important characteristics of E-Ink displays.

| Characteristics | Ratings | Notes |
|---|---|---|
| Color gamut | Monochromatic to multicolor | Only one color or a small set of colors. |
| Color depth | 2 to 16 typically | Can display a limited range of grayscales. |
| Display type | Reflective | Can only be read in bright light. |
| Update speed | Very low | From half a second to more than four seconds, depending on the model. |
| Temperature range | Low | Most e-ink displays do not work well below the freezing point. |
| Interface complexity | Low | Models with SPI or I2C are available. |
| Power consumption | Very low | Once rendered, this display uses no power to keep what’s on the screen. |
| Size | Variable | A wide variety is available from about 1.2” to more than 40” in diagonal length. |
| Cost | Medium – High | This display technology is relatively new, and can cost quite a bit depending on features required. |

Table 4 – E-paper displays at a glance

There are two standard serial communication protocols commonly used to interface with moderately complex displays.

I2C is a relatively low speed, but simple, interface used by some displays. Only two signal wires (clock and data), plus a ground connection, are needed for this interface, and data is sent serially, one bit at a time.

SPI is a medium speed interface used by some displays. Usually three to four signal wires, plus a ground connection, are needed for this interface. Data is also sent serially, but SPI is generally faster than the I2C bus.
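To get a feel for the speed difference, you can estimate how long one full screen update takes on each bus. The sketch below assumes a hypothetical 128 x 64 monochrome display, I2C running at 400 kHz, and SPI running at an assumed 8 MHz clock. It counts nine clock cycles per byte for I2C (eight data bits plus an acknowledge bit) and ignores addressing and command overhead, so real figures will be somewhat higher.

```python
def frame_bytes(width: int, height: int, bits_per_pixel: int) -> int:
    """Bytes needed for one full frame, rounded up to a whole byte."""
    return (width * height * bits_per_pixel + 7) // 8


def transfer_time_s(n_bytes: int, clock_hz: float, clocks_per_byte: int) -> float:
    """Time to clock n_bytes over a serial bus, ignoring addressing and commands."""
    return n_bytes * clocks_per_byte / clock_hz


frame = frame_bytes(128, 64, 1)                 # hypothetical monochrome panel
i2c = transfer_time_s(frame, 400_000, 9)        # 8 data bits + 1 ACK bit per byte
spi = transfer_time_s(frame, 8_000_000, 8)      # assumed 8 MHz SPI clock

print(f"frame size: {frame} bytes")
print(f"I2C at 400 kHz: {i2c * 1000:.2f} ms per frame")
print(f"SPI at 8 MHz:   {spi * 1000:.2f} ms per frame")
```

Under these assumptions a full update takes roughly 23 ms over I2C and about 1 ms over SPI, which is why SPI is the usual choice once a display gets larger or needs to animate.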

OLED displays

OLED (Organic LED) displays are somewhat similar to the LED matrix displays described previously, except that the pixel density, or DPI (Dots Per Inch), can be very high.

This is because Organic LED technology is quite different from regular LEDs, and it allows a manufacturing process that can produce densely packed pixels.

Note that there are two sub-kinds of OLED displays: passive matrix and active matrix OLEDs, respectively abbreviated PMOLED and AMOLED.

In PMOLEDs, each sub-pixel (R, G, or B) is located at the intersection of control lines running horizontally (in rows) and vertically (in columns). Controlling a pixel then involves energizing the appropriate row and column lines.

Figure 5 below shows a small PMOLED display.

In a PMOLED drive scheme, as soon as a given row or column is no longer energized, the OLED at that junction turns off.

Therefore, each pixel is lit for only a fraction of the time it takes to refresh the entire display area, and that fraction shrinks as rows are added. Hence this OLED drive scheme is usually limited to fairly low resolution screens.
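The effect of row count on a passive matrix can be put into numbers. The sketch below assumes the simplest scanning scheme, where one row is energized at a time and perceived brightness is proportional to the time a pixel is lit. The 64 rows, 60 Hz refresh rate, and 100 cd/m² target are hypothetical example values.

```python
def duty_cycle(rows: int) -> float:
    """Fraction of each frame during which a given row is energized."""
    return 1.0 / rows


def row_on_time_s(rows: int, refresh_hz: float) -> float:
    """How long each row is lit during one refresh of the whole display."""
    return duty_cycle(rows) / refresh_hz


def peak_luminance(average_luminance: float, rows: int) -> float:
    """Peak brightness needed while lit to reach the desired average brightness."""
    return average_luminance / duty_cycle(rows)


rows, refresh_hz = 64, 60.0                     # hypothetical small PMOLED
print(f"duty cycle: {duty_cycle(rows):.4f}")
print(f"row on-time: {row_on_time_s(rows, refresh_hz) * 1e6:.1f} microseconds")
print(f"peak needed for 100 cd/m2 average: {peak_luminance(100.0, rows):.0f} cd/m2")
```

Doubling the number of rows halves the on-time and doubles the peak brightness each pixel must reach, which stresses the OLED material and the drive circuitry. An active matrix avoids this because each pixel can stay lit for the whole frame.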

In AMOLEDs, each color sub-pixel is individually controlled by a transistor acting like an electronic switch. The fabrication process is more complicated than the PMOLED, but the display is faster and more uniform.

In general, low resolution OLEDs are PMOLED and higher resolution OLEDs are AMOLED. Most modern high-end smartphones offer AMOLED displays.

Table 5 below gives some of the more critical characteristics of OLED graphics displays.

| Characteristics | Ratings | Notes |
|---|---|---|
| Color gamut | Monochromatic, multicolor or full color | Can be only one color, or a small set of colors, or full color. |
| Color depth | 2 to 256 or more per primary color | Simple displays can be black and white. However, the most sophisticated of these displays can reproduce millions of colors. |
| Display type | Emissive | Can be viewed in sunlight or in the dark. |
| Update speed | High | Suitable for videos or full speed animations. |
| Temperature range | High | Can be used in a wide range of ambient temperatures. |
| Interface complexity | Low to high | Models with SPI or I2C are available for the simpler displays. High resolution displays have sophisticated interfaces such as a digital parallel interface or MIPI. |
| Power consumption | Medium – Variable | Power consumption depends on number of pixels illuminated. |
| Size | Variable | A wide variety is available from about 1.2” to more than 40” in diagonal length. |
| Cost | Medium – High | This display technology can cost quite a bit depending on features required. |

Table 5 – OLED displays at a glance

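More complex displays will typically interface via either a digital parallel port (sometimes called a Digital Video Port or DVP), or a specialized high speed serial interface defined by the Mobile Industry Processor Interface (MIPI) Alliance. For displays this is the MIPI Display Serial Interface (DSI), usually just called MIPI.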

MIPI is a standard high speed interface that is widely used in modern consumer electronics including smartphones, tablets, and laptops. The advantage of MIPI over DVP is that fewer pins are required.

Note that MIPI is typically only supported by more advanced microprocessors, and is not available on most microcontrollers. One exception is the STM32F469 microcontroller from STMicroelectronics, which does offer a MIPI display interface.
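A quick bandwidth estimate shows why larger displays need these faster interfaces. The sketch below computes the raw pixel data rate for a hypothetical 800 x 480 panel at 24 bits per pixel and 60 frames per second, and then the best-case frame rate the same panel could reach over an assumed 20 MHz SPI link. Blanking intervals and protocol overhead are ignored, so real requirements are higher.

```python
def pixel_data_rate_bps(width: int, height: int,
                        bits_per_pixel: int, frames_per_second: float) -> float:
    """Raw pixel data rate in bits per second, ignoring blanking and overhead."""
    return width * height * bits_per_pixel * frames_per_second


def max_frame_rate(width: int, height: int,
                   bits_per_pixel: int, link_bps: float) -> float:
    """Best-case full-frame update rate over a link of the given bit rate."""
    return link_bps / (width * height * bits_per_pixel)


# Hypothetical 800 x 480 panel, 24 bits per pixel, 60 frames per second.
needed = pixel_data_rate_bps(800, 480, 24, 60)
print(f"required: {needed / 1e6:.2f} Mbit/s")

# The same panel over an assumed 20 MHz SPI link (one bit per clock).
print(f"SPI best case: {max_frame_rate(800, 480, 24, 20e6):.2f} frames/s")
```

At over 500 Mbit/s, this modest panel is already far beyond what SPI or I2C can deliver, so the data has to be spread across many parallel lines or sent over a few very fast serial lanes.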

LCD displays

The last category of graphics displays is the LCD. These displays can have very high pixel densities, and can display a full range of colors with full motion capabilities.

They are also available in a wide range of sizes from 2” up to high definition TVs with a diagonal length of 100”.

This performance makes driving them quite complicated, and dedicated controller chips are needed for anything but the simplest models. Note that many small to medium size LCD displays are also available with a touchscreen overlay.

As with OLED displays, LCD displays are available in both passive and active matrix versions.

An active matrix LCD is typically implemented using thin-film transistor (TFT) technology. To manufacture a TFT display, a thin film of silicon is deposited on a glass panel to form the transistors.

Table 6 below shows some of the characteristics of LCD displays.

| Characteristics | Ratings | Notes |
|---|---|---|
| Color gamut | Full color | Most offer a full range of colors. |
| Color depth | 2 to 256 per primary color | Simple displays can be monochrome. However, most of these displays can reproduce millions of colors. |
| Display type | Transmissive | Can be viewed in subdued sunlight or in the dark. Requires a backlight. |
| Update speed | Medium – High | Suitable for videos or full speed animations. |
| Temperature range | Medium – High | Can be used in a wide range of ambient temperatures. |
| Interface complexity | Low to high | Models with SPI or I2C are available for the lower resolution displays. High resolution displays have sophisticated interfaces such as a digital parallel interface, HDMI or MIPI. |
| Power consumption | Medium – Variable | Power consumption depends mostly on the type and intensity of the backlight, and the display controller. |
| Size | Variable | A wide variety is available from about 1.2” to more than 100” in diagonal length. |
| Cost | Medium – High | This display technology can cost quite a bit, mostly depending on size. |

Table 6 – LCD displays at a glance

Conclusion

The display you choose for your product is one of the most important design decisions you will make.

Not only is the display the component that your users will interact with the most, but it is also commonly the most expensive and most power-hungry component in a product.

When choosing a display, it is best to first narrow your choices down to one type of display. Once you have selected the display type, you can fine-tune your selection by picking a specific display model that meets your requirements.